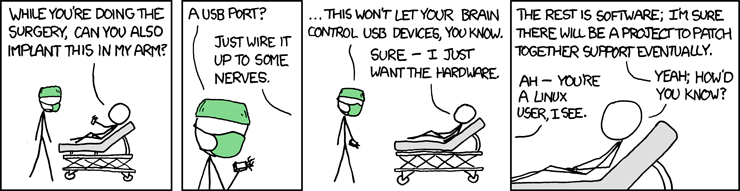

From XKCD last week:

This comic (aside from the nice dig at Linux) gets right at some of the core difficulties of treating the mind as a purely functional input/output device. Just imagine the huge technical challenge of connecting our nervous system to a relatively simple interface, USB. Not only do you have to treat the brain as a computer; you also have to make it run the same protocols that we have on modern computers.

Biofeedback mechanisms might be suitable in theory, but if their history thus far is any guide, there are severe limitations on the bit rate they can achieve. Brains may seem like computers filled with information-dense electrical signals — but we have not yet figured out how to turn those signals into streams of data that are both dense and sufficiently well-defined to interface with a computer. For now, if you want to communicate with a computer, you’d have better results chucking baseballs at a keyboard than relying on any brain-scanning technology.

As for the comic’s joke about patching the “software” of the mind, half of the humor in it (the half that’s not about Linux) is in the meaninglessness of the proposition. It’s commonplace among strong-A.I. enthusiasts to describe the brain as hardware and the mind as software, but which part of the brain is the hardware, and how is the software encoded into it? Suppose you want to change that encoding to “patch” the mind. You could treat the software as encoded in the pattern of neurons and synapses; but then, assuming you know how to change that pattern precisely, what exactly do you change? What is the mapping from a neuron-and-synapse encoding to a high-level feature of the mind?

Perhaps neuroscientists will, in time, discover new compatibilities between minds and machines. But it is striking that our attempts so far to interface brains and computers have had to cope with the mind’s stubborn and peculiar patterns and organizations, rather than finding the mind to be like the computers we know: divisible into distinct modules, submodules, and sub-submodules that are subject to our direct, well-defined, and predictable control. We have found some success, that is, in attempting to make computers more like minds, but not much in treating minds as computers.

Futurisms

October 8, 2009